Smartphones are becoming the most pervasive computing devices of all time. They are even becoming the best-selling consumer electronic devices of all. Unsurprisingly, there is a huge amount of research that investigates how people use their phones and how we can improve their experience. If you do research using smartphones, an important practical question is which platform to choose. Basically, there are three major platforms left alive: iOS on the iPhone, Windows Phone, and Android.

Developing

Developing for Android is nice, but developing for the other platforms isn’t worse. While Java might not be the most innovative language, it easily beats iOS’s Objective-C (garbage collection, anyone?) and is almost on par with the .NET languages (and you could also use one of the other JVM languages). What makes Java compelling is the huge number of available examples, but what really stands out (for us) is that all our computer science students have to learn Java in the first semester. This means that every single somewhat capable student knows how to program in Java, which is also used throughout their university courses. It also comes in handy (actually, the alternative would be a real show stopper) that, unlike developing for iOS, you don’t need a Mac and, unlike developing for Windows Phone, you don’t need Windows. Linux, Windows, MacOS: yes, they can all be used to develop for Android (and those who like the pain can also use BSD).

Openness

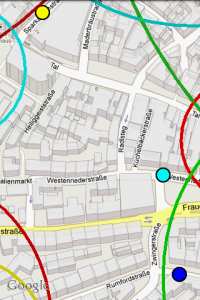

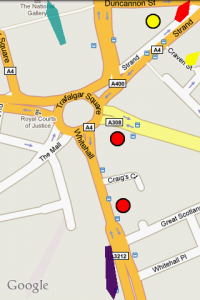

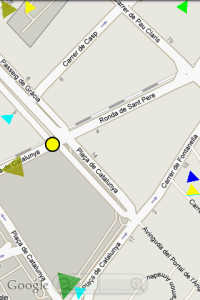

Android is free and open. Sure, it is probably free as in beer rather than free as in speech, but you can still look into the code. Being able to look into your OS’s source code might seem like an academic detail, but it matters in practice. One of my former students had to look into Android’s sources to understand the memory management when developing commercial apps. Having the source code enabled us to understand the Android keyboard and reuse it during our studies. We even patched Android to develop handheld Augmented Reality prototypes. All this is only possible if you have the source code available. For these examples, it might not be necessary to look into the code on other platforms. Still, at one point or another you might want to dig down to the hardware level, and you are screwed if it isn’t Android that you have to dig through.

Deploying

While developing prototypes and conducting lab studies is nice, at one point or another you might want to deploy your shiny research prototype. It might be for research, it might be for fun, or just for the money. Deploying your app in the Android Market takes just seconds (if you already have those screenshots and descriptions readily available). There is no approval process. No two weeks of waiting until Apple decides that your buggy prototype is just too buggy a prototype. All you need is $25 and a credit card (and a Google Account and a soul to sell).

Market share

Windows Phone will certainly increase its market share by some 100% soon, which isn’t difficult if you start from 0.5%. However, Android has overtaken all other platforms, including iOS and Blackberry. The biggest smartphone manufacturer is Samsung with their Android phones. They sell more smartphones than Nokia and they sell more smartphones than Apple. And they are not the only company with an Android phone in their portfolio.

Fragmentation

Fragmentation is horrible! I developed for Windows Mobile and for JavaME. Even simple applications need to be tested on different devices before you can hope that they work. Things aren’t too bad for Android (if you don’t use the camera or some sensors or recent APIs or some other unimportant things…). Fragmentation can even be great for the average mobile HCI researcher. Need a device with a big screen or with a small display? Fast processor, long battery life, TV out, or NFC? There is a device for that! There are very powerful and expensive devices (the ones you will use to test your awesome interface) but also very cheap ones for less than 80€ (that you can give to your nasty students).

Usability, UX, …

Android offers the best usability of all platforms ever. Well, probably not. Would I buy an Android phone for my mother? If money were no object, I would certainly prefer an iPhone. What would I recommend to my coolish stepbrother? Certainly a Windows Phone to impress the girls. But what would I recommend to my students? There is nothing but Android!

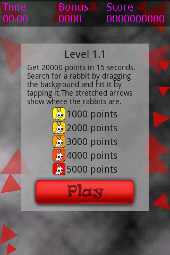

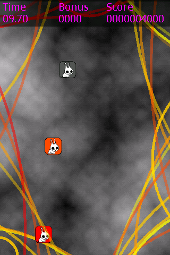

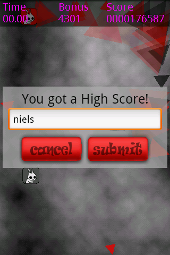

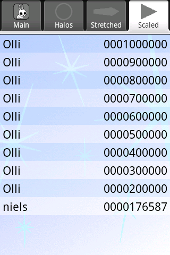

Hit the Rabbit!
